WRONG

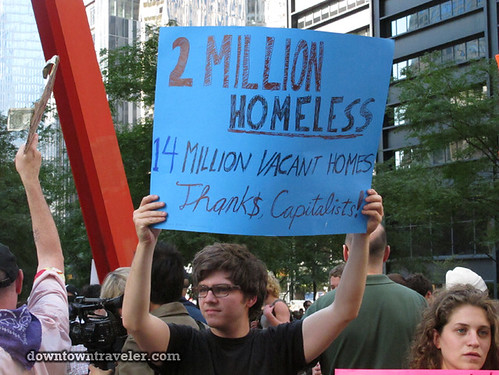

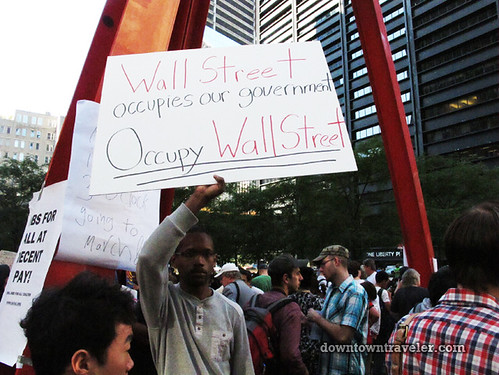

Capitalism isn't destroying America. Capitalism made America great! Don't forget that. What is destroying America is the fact that capitalism has influence over government. Government is the only institution that we allow to forcibly control our lives. It is a social contract that we all enter into, and under it we agree to submit. We agree to abide by the rules in return for certain shared privileges: national defense, clean air, clean water, respect for property, domestic safety. WALL STREET SHOULD HAVE NOTHING TO DO WITH THIS. It is when Wall St. and the rest of the 1% use their dollars to buy votes that things go downhill. Here are some signs that really hit the core of the issue:
